In Short

- Googlebot crawls only the first 2MB of uncompressed HTML per page.

- Content beyond that limit may not be processed or indexed.

- There is no warning in Google Search Console when a page is truncated.

- Large e-commerce category pages and page-builder-heavy templates are most at risk.

- XOVI’s Site Audit can help identify oversized pages as a starting point for structural review.

Google’s official Googlebot documentation has been updated to clarify something that affects how pages are indexed: when crawling for Google Search, Googlebot processes only the first 2MB of uncompressed HTML. Once that limit is reached, Googlebot stops fetching. Only what was downloaded gets considered for indexing.

There is no warning. No error code. No signal in Search Console.

This is not a new penalty or ranking update. It is a documented technical constraint that has real implications for agencies managing sites with complex page structures.

What the Documentation Actually Says

Google’s official Googlebot documentation now explicitly states that the 2MB limit applies to uncompressed HTML and other supported file types. If a page exceeds 2MB of uncompressed content, Googlebot stops at the cutoff point and indexes only what it received.

The key word is uncompressed. Gzip compression reduces the size of files in transit, but Googlebot evaluates the decompressed HTML. A page that transfers as 300KB can easily expand to 2MB or beyond once decompressed, depending on how the HTML is structured.
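To make the gap between transfer size and uncompressed size concrete, here is a minimal sketch using Python’s standard `gzip` module. The page is synthetic, repetitive markup standing in for a bloated template, and the byte value used for "2MB" (2 × 1024 × 1024) is an assumption, since the limit is quoted here only as "2MB".

```python
import gzip

# Assumption: "2MB" is read as 2 * 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def size_report(html: bytes) -> dict:
    """Compare a document's gzip transfer size with its uncompressed size."""
    compressed = gzip.compress(html)
    return {
        "uncompressed_bytes": len(html),
        "gzip_bytes": len(compressed),
        "over_limit": len(html) > LIMIT_BYTES,
    }


# Synthetic page: one product card repeated many times (illustrative only).
row = b'<div class="product-card"><a href="/p/item">Item</a></div>\n'
page = b"<html><body>" + row * 50_000 + b"</body></html>"

report = size_report(page)
print(report)
```

Repetitive markup compresses extremely well, so the gzip figure here ends up a small fraction of the uncompressed one. Real pages compress less dramatically, but the direction is the same: the number you see in transit understates what has to be parsed.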

Which Page Types Are at Risk

Most pages on most sites are well under 2MB. This is a non-issue for a standard blog post or a product detail page with clean markup.

The risk concentrates in specific page types that agencies regularly manage for clients.

E-commerce category pages

A category page pulling 200+ products, with full product descriptions, schema markup, and inline filtering logic embedded in the HTML, can approach or exceed 2MB quickly. If Googlebot stops reading at the limit, products and internal links at the bottom of the page may not be crawled.
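A rough budget calculation shows how quickly this adds up. The sketch below is plain arithmetic: the 10KB of markup per product card and the 150KB of shared header, footer, and filter markup are hypothetical figures chosen for illustration, and "2MB" is assumed to mean 2 × 1024 × 1024 bytes.

```python
# Assumption: "2MB" is read as 2 * 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def max_products(per_product_bytes: int, overhead_bytes: int = 0,
                 limit: int = LIMIT_BYTES) -> int:
    """How many product cards fit before the page's HTML reaches the limit."""
    return max(0, (limit - overhead_bytes) // per_product_bytes)


# Hypothetical template: 10KB of HTML per product, 150KB of shared markup.
print(max_products(10 * 1024, overhead_bytes=150 * 1024))
```

Under those assumed figures, the budget runs out just short of 200 products. Measure your own template’s per-product weight before drawing conclusions.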

Faceted navigation

Faceted filter combinations often generate significant HTML, especially when the page renders all possible filter states in the DOM instead of loading them dynamically. A filtered URL whose markup embeds hundreds of parameter combinations is a common candidate for an oversized page.

Page-builder-heavy WordPress builds

Elementor, Divi, WPBakery, and similar tools are known for generating verbose HTML. A single section built in a page builder can produce 10–20x the markup of hand-coded HTML achieving the same visual result. Pages assembled from dozens of builder sections accumulate that weight fast.

Large FAQ sections

FAQ content is often added incrementally, a few questions each quarter, and rarely audited for total page weight. A page with 80 expanded FAQ items, structured data markup, and a full site header and footer can exceed the limit without anyone noticing.

The Practical Risks

Content beyond the limit isn’t crawled

If Googlebot stops at 2MB, anything after that point isn’t considered for indexing. For a category page, that could mean the last 50 products on the page are invisible to Google, regardless of how well they’re written or priced.

Internal links after the cutoff aren’t discovered

Googlebot uses the HTML it receives to discover new URLs. If internal links to subcategories, product pages, or pagination appear after the 2MB threshold, those URLs won’t be found during that crawl. This can create gaps in crawl coverage without a visible cause.
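One way to see which links would be affected is to parse the page twice: once cut off at the limit, once in full, and compare. The sketch below uses Python’s standard `html.parser`. It only approximates the effect of a byte cutoff and is not a model of how Googlebot actually parses. The function names and the sample page are illustrative, and the byte value for "2MB" is an assumption.

```python
from html.parser import HTMLParser

# Assumption: "2MB" is read as 2 * 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags in document order."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def collect_links(html: bytes) -> list:
    parser = LinkCollector()
    # errors="ignore" drops a multi-byte character split by the cutoff.
    parser.feed(html.decode("utf-8", errors="ignore"))
    return parser.hrefs


def links_after_cutoff(html: bytes, limit: int = LIMIT_BYTES) -> list:
    """Links present in the full page but absent from the first `limit` bytes."""
    seen_before = set(collect_links(html[:limit]))
    return [href for href in collect_links(html) if href not in seen_before]


# Illustrative page: one link at the top, filler, one pagination link at the end.
page = (
    b'<a href="/category/shoes">Shoes</a>'
    + b"<p>" + b"x" * LIMIT_BYTES + b"</p>"
    + b'<a href="/category/shoes?page=2">Next</a>'
)
print(links_after_cutoff(page))
```

If the list that comes back contains pagination or subcategory URLs, those are the links to move higher in the template or expose through another crawlable path.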

Ranking instability without a clear signal

If a page is borderline, sometimes under the limit and sometimes over it depending on server-side rendering or A/B test variants, rankings may fluctuate without any identifiable cause. There is no Search Console report for truncated pages. You will not see an error. You may only see ranking variation with no clear starting point for diagnosis.

What Most Agencies Get Wrong

The assumption is that page speed audits cover this problem. They don’t.

Page speed tools measure file transfer size, not uncompressed HTML size. A page can pass Core Web Vitals, score 90+ in PageSpeed Insights, and still deliver 3MB of uncompressed HTML to Googlebot. These are separate measurements.

Similarly, a page can load in under two seconds for a user and still be oversized for Googlebot’s purposes. Performance and crawlability are related, but they are not the same thing.

How This Impacts AI Search

AI-powered search systems, including AI Overviews and retrieval-based features, rely on structured and accessible HTML to interpret and surface page content. If a crawler cannot fully process a page, the downstream interpretation of that content may be incomplete.

This does not mean oversized pages are penalized in AI-driven results. However, clean and fully crawlable HTML gives these systems more information to work with. A page truncated at 2MB is only partially read, and a partially read page may be inconsistently represented across search features.

Structural clarity supports consistent visibility, whether the system reading your page is a traditional index or an AI-assisted one.

For agencies monitoring how AI systems describe and surface their clients, XOVI AI provides visibility into how brands, products, and services are interpreted across major AI platforms. Clean technical structure strengthens that interpretation layer. If foundational crawlability is limited, downstream AI visibility can also become inconsistent.

Keeping pages within crawlable limits is good practice for both traditional search and AI-driven discovery.

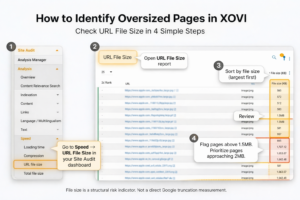

How to Check This in XOVI

This is where having structural visibility matters.

XOVI’s Site Audit includes URL file size reporting and file size distribution across audited domains. It’s a practical way to identify which pages may be generating excessive HTML and warrant a closer structural review.

XOVI does not directly measure Google truncation. File size as reported by a crawl tool is a useful proxy for identifying structural risk, not a guarantee of what Googlebot receives.

The goal is proactive diagnosis.

Step-by-step:

- Run a Site Audit on your target domain.

- Navigate to Speed → URL File Size.

- Sort URLs by size, largest first.

- Review any pages consistently above 1.5MB.

- Identify which template types are generating excessive HTML, such as category pages, builder templates, and FAQ hubs.

- Prioritize structural cleanup on pages approaching or exceeding 2MB.

Quick check (existing analysis)

If you already have an analysis running, you can also:

- Go to Site Audit → Analysis → Overview

- Click on Technology

- Look for the issue “High overall size”

This surfaces pages where file size may already be flagged as a technical issue and can be a faster starting point.

Flag pages above 1.5MB for review. That threshold gives your team a working buffer before reaching Google’s documented limit.
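If you have per-URL sizes available as data, from whichever crawl tool you use, the same triage can be scripted. This is a minimal sketch: the input is assumed to be a plain mapping of URL to size in bytes, the sample URLs and sizes are made up, and the byte values for 1.5MB and 2MB are assumptions about how those thresholds translate.

```python
# Assumptions: thresholds expressed in bytes using 1MB = 1024 * 1024.
REVIEW_BYTES = int(1.5 * 1024 * 1024)
LIMIT_BYTES = 2 * 1024 * 1024


def triage(sizes: dict) -> list:
    """Return (url, size, status) rows, largest page first."""
    rows = []
    for url, size in sorted(sizes.items(), key=lambda kv: kv[1], reverse=True):
        if size > LIMIT_BYTES:
            status = "over limit"
        elif size > REVIEW_BYTES:
            status = "review"
        else:
            status = "ok"
        rows.append((url, size, status))
    return rows


# Hypothetical sizes in bytes, for illustration only.
sample = {
    "/blog/post": 120_000,
    "/category/shoes": 1_800_000,
    "/faq": 2_500_000,
}
for row in triage(sample):
    print(row)
```

Grouping the flagged URLs by template afterwards usually matters more than the individual rows, because one oversized template tends to account for many oversized pages.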

What to Fix If a Page Is Too Large

Once you’ve identified oversized pages, the fix depends on what’s generating the bulk. Common approaches:

- Reduce the number of products rendered per category page. Paginate earlier. Showing 50 or 100 products per page is more manageable than displaying 300 or more.

- Implement server-side filtering instead of embedding all filter states in the DOM.

- Remove duplicated or stacked schema markup blocks. Multiple overlapping JSON-LD blocks on one page add weight without benefit.

- Audit and remove unused builder elements. Page builders often leave hidden sections, empty rows, and redundant wrappers in the HTML even when they’re invisible to users.

- Replace large static FAQ dumps with expandable structures that load content progressively, keeping base HTML lighter.

- Review server-side rendering logic for pages where the rendered output grows disproportionately compared to what users actually see.
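For the schema markup item above, exact duplicates are the easiest case to detect automatically. The sketch below collects JSON-LD blocks with Python’s standard `html.parser`, normalizes each one so that key order and whitespace do not matter, and reports any block that appears more than once. The class and function names are illustrative. Blocks that overlap without being identical still need manual review.

```python
import json
from collections import Counter
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def duplicate_jsonld(html: str) -> dict:
    """Map each normalized JSON-LD block that occurs more than once to its count."""
    parser = JsonLdCollector()
    parser.feed(html)
    counts = Counter()
    for raw in parser.blocks:
        try:
            # Re-serialize with sorted keys so formatting differences are ignored.
            key = json.dumps(json.loads(raw), sort_keys=True)
        except json.JSONDecodeError:
            key = raw.strip()  # keep invalid JSON comparable as plain text
        counts[key] += 1
    return {key: n for key, n in counts.items() if n > 1}


sample_html = (
    '<script type="application/ld+json">{"@type":"FAQPage","name":"x"}</script>'
    '<script type="application/ld+json">{ "name": "x", "@type": "FAQPage" }</script>'
    '<script type="application/ld+json">{"@type":"Product"}</script>'
)
print(duplicate_jsonld(sample_html))
```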

None of these changes are guaranteed to influence rankings directly. Structural size is not a ranking factor, but it can limit what Googlebot processes, which may affect indexation and crawl coverage.

The Takeaway

Google didn’t introduce a new rule. It documented a limit that exists. The agencies that are prepared are the ones that already know which client pages are pushing against that threshold.

Ready to Check Your Client Sites?

Run a Site Audit on your largest client domains and review file size distribution today. Structural issues compound quietly. Identifying them early gives you control.

New to XOVI?

You can test everything yourself.

Start a 14-day free trial. No credit card required.

Sign up and run your first Site Audit in minutes.

Frequently Asked Questions

Does Google index only 2MB of HTML?

According to Google’s Googlebot documentation, Googlebot processes only the first 2MB of uncompressed HTML when crawling a page for Google Search. Content beyond that threshold is not fetched and therefore not considered for indexing.

Does gzip compression affect the 2MB limit?

No. The 2MB limit applies to uncompressed HTML. Googlebot decompresses content before evaluating it. A page served as 400KB via gzip may expand to 3MB+ of uncompressed HTML, which would exceed the limit. File size as seen in your browser’s network tab or a speed tool reflects compressed transfer size, not the decompressed HTML that Googlebot evaluates.

How can I check if my page exceeds 2MB?

There is no direct signal in Google Search Console. The most practical approach is to use a crawl tool that reports uncompressed HTML size. In XOVI, the Site Audit’s URL File Size report provides per-URL file size data across your domain, which you can sort to surface the largest pages for review.
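For a one-off spot check of a single URL, a short script can also do the job. The sketch below uses only Python’s standard library: it requests the page with gzip allowed, undoes the transfer compression, and counts the resulting bytes. The function names are illustrative and "2MB" is assumed to mean 2 × 1024 × 1024 bytes. The response your script receives is not guaranteed to match what Googlebot receives, since servers can vary output by user agent, so treat the result as an estimate.

```python
import gzip
import urllib.request

# Assumption: "2MB" is read as 2 * 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def decode_body(raw: bytes, content_encoding: str) -> bytes:
    """Undo gzip transfer compression; other encodings are returned unchanged."""
    if content_encoding.lower() == "gzip":
        return gzip.decompress(raw)
    return raw


def uncompressed_html_size(url: str) -> int:
    """Fetch a URL and return the size of its HTML after decompression."""
    request = urllib.request.Request(url, headers={"Accept-Encoding": "gzip"})
    with urllib.request.urlopen(request, timeout=30) as response:
        raw = response.read()
        encoding = response.headers.get("Content-Encoding", "")
    return len(decode_body(raw, encoding))


# Example call (placeholder URL, left commented out so nothing is fetched here):
# size = uncompressed_html_size("https://example.com/category/")
# print(size, "over limit" if size > LIMIT_BYTES else "within limit")
```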

Does this impact Core Web Vitals?

Not directly. Core Web Vitals measure user-facing loading performance, which depends on network transfer size, rendering, and resources, not the raw HTML size that Googlebot evaluates. A page can score well on Core Web Vitals and still exceed the 2MB uncompressed HTML threshold. These are separate measurements that address different aspects of page quality.

Does this affect AI search features?

Not as a direct penalty. But AI-powered search features depend on fully crawlable, structured HTML to interpret page content accurately. A page that’s truncated at 2MB may be incompletely represented in AI-driven results. Keeping pages within crawlable limits supports more consistent visibility across both traditional and AI-assisted search features.

XOVI’s Site Audit reports URL file size and page size distribution across crawled domains. Use it to identify oversized pages and structure your technical review.